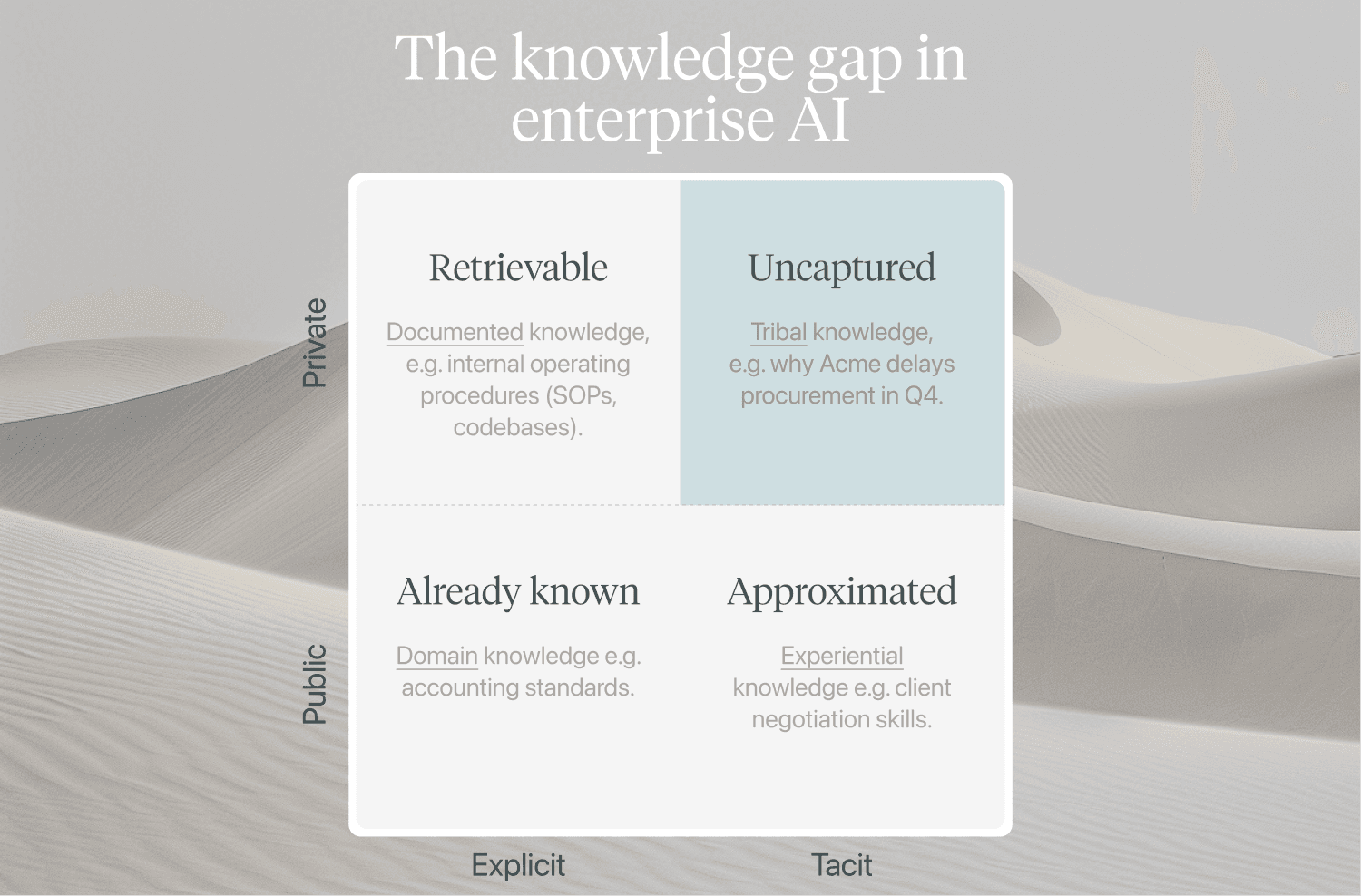

The knowledge gap in enterprise AI

Context windows don't solve on-the-job learning when the knowledge was never written down. A taxonomy of enterprise knowledge, and how agents can capture the tacit parts.

In the latest Dwarkesh pod, Dario Amodei says "context window solves on-the-job learning". This is true in domains where agents can infer tacit knowledge from existing artefacts (e.g. a codebase), but most domains don't have this luxury. Knowledge is learned on the job and only partially documented. So how do you capture this tribal knowledge as context for agents?

For an agent to work within an enterprise, it needs four kinds of knowledge:

- Domain knowledge: Explicit and public. Already known by LLMs.

- Experiential knowledge: Tacit and public. Approximated by LLMs.

- Organisational knowledge: Explicit and private. Retrievable internally e.g. via RAG.

- Institutional knowledge: Tacit and private. Uncaptured.

Institutional knowledge is especially important because it contains the high-signal nuances that inform judgment calls. It's the knowledge you lose when an employee leaves the business, for example why we always make a certain exception for a customer.
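The taxonomy above is really two axes: explicit vs. tacit, and public vs. private. As a rough sketch (the names `Form`, `Access`, `KnowledgeType` and `classify` are made up for illustration, not taken from any library), it could be modelled like this:

```python
from dataclasses import dataclass
from enum import Enum


class Form(Enum):
    EXPLICIT = "explicit"  # written down somewhere
    TACIT = "tacit"        # lives in people's heads


class Access(Enum):
    PUBLIC = "public"      # available outside the company
    PRIVATE = "private"    # only available inside the company


@dataclass(frozen=True)
class KnowledgeType:
    name: str
    form: Form
    access: Access
    status: str  # how agents get at it today, per the list above


# The four quadrants, copied from the list above.
TAXONOMY = [
    KnowledgeType("domain", Form.EXPLICIT, Access.PUBLIC, "already known by LLMs"),
    KnowledgeType("experiential", Form.TACIT, Access.PUBLIC, "approximated by LLMs"),
    KnowledgeType("organisational", Form.EXPLICIT, Access.PRIVATE, "retrievable internally, e.g. via RAG"),
    KnowledgeType("institutional", Form.TACIT, Access.PRIVATE, "uncaptured"),
]


def classify(form: Form, access: Access) -> KnowledgeType:
    """Return the quadrant that matches the given form and access."""
    for kind in TAXONOMY:
        if kind.form is form and kind.access is access:
            return kind
    raise ValueError("no matching knowledge type")
```

Calling `classify(Form.TACIT, Access.PRIVATE)` returns the institutional quadrant, the only one whose status is "uncaptured".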

Here are a few ideas for synthesising tribal knowledge and making it accessible to agents.

How can AI agents self-learn tribal knowledge?

Interview internal experts (with AI)

ListenLabs uses AI for market research interviews. So why not use AI interviewers to document internal knowledge from experts? Every conversation should be anchored in a real event (e.g. "walk me through how you handled this dispute yesterday"). The more recent the episode, the better. The resulting data can be handed over to agents as context.

Watch people work

Watch operators do their work and infer institutional knowledge from real behaviour (e.g. via screen recordings). Observe how internal experts operate systems, draft documents, communicate with colleagues and make decisions. When an operator skips a step or deviates from an SOP, it's recorded. Mercor and others are already doing this.

Capture reasoning behind past exception cases

Mine historical data and processes for ambiguous exception cases and let internal experts label them. The resulting data is internal, company-specific knowledge that was previously undocumented and can now be used as context for agents.

Let operators train internal agents

Every process has exceptions. Most teams operate somewhere between pragmatism and doing things by the book. Existing systems of record capture outcomes but not the reasoning behind them. Once agents are in the execution path of a process, every approved exception can be used as a future signal.
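One way this could look in practice, as a minimal sketch rather than a reference design: store the reasoning alongside each approved exception and hand the most recent ones back to the agent as context. `ExceptionRecord`, `ExceptionLog` and all field names are hypothetical, and the in-memory list stands in for whatever store a real system would use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ExceptionRecord:
    process: str      # e.g. "refunds" (hypothetical process name)
    case_id: str
    deviation: str    # what was done differently from the SOP
    reasoning: str    # why the operator approved it
    approved_by: str
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class ExceptionLog:
    """In-memory log of approved exceptions (assumption: no persistence)."""

    def __init__(self) -> None:
        self._records: list[ExceptionRecord] = []

    def record(self, rec: ExceptionRecord) -> None:
        # The reasoning is the whole point, so reject records without it.
        if not rec.reasoning.strip():
            raise ValueError("an approved exception needs its reasoning")
        self._records.append(rec)

    def context_for(self, process: str, limit: int = 5) -> str:
        """Format the most recent exceptions for a process as agent context."""
        matches = [r for r in self._records if r.process == process]
        recent = sorted(matches, key=lambda r: r.recorded_at, reverse=True)[:limit]
        return "\n".join(
            f"- {r.deviation} (approved by {r.approved_by}): {r.reasoning}"
            for r in recent
        )
```

The design choice that matters here is making `reasoning` mandatory at write time. A log that only stores the deviation would repeat the gap in today's systems of record.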

Self-learning from human operator touch points comes closest to Dario's vision of self-learning, on-the-job agents.